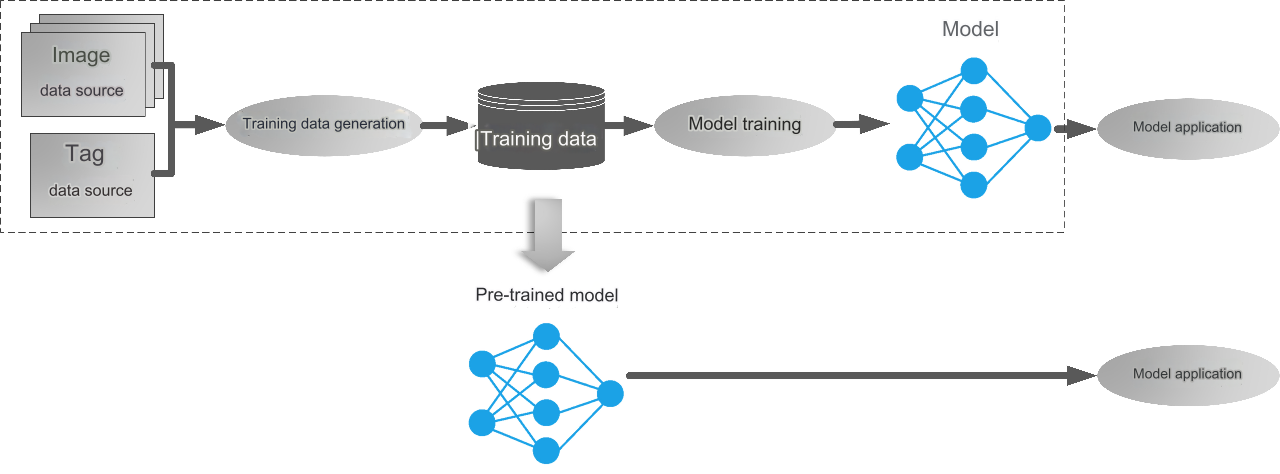

Deep learning has clear advantages in remote sensing image interpretation, and deep learning models are increasingly used for this work. Training a good deep learning model requires a large amount of sample data. In practice, however, users often have only a small amount of sample data, or not enough to carry out intelligent interpretation at all, which results in poor model performance. Pre-trained models are an effective way to address this problem. A pre-trained model is a deep learning model that has already been trained on a large amount of high-quality sample data, usually for a specific task: extracting specific ground objects (such as cultivated land and buildings), classifying ground object types (such as land use/cover classification), detecting specific targets (such as aircraft and ships), or detecting changes in specific ground objects (such as road changes).

What pre-trained models does SuperMap provide?

Remote sensing image interpretation pre-trained model

Model training requires not only high-quality data but also a lot of time and computing resources. To reduce users' costs and meet the demands of remote sensing image interpretation, SuperMap provides six commonly used pre-trained models: an urban building extraction model, an urban water body extraction model, a cultivated land extraction model, a greenhouse extraction model, an aircraft recognition model, and a ship identification model. All six models can be used to analyze high-resolution visible-light images.

▲ SuperMap remote sensing image intelligent interpretation pre-trained model

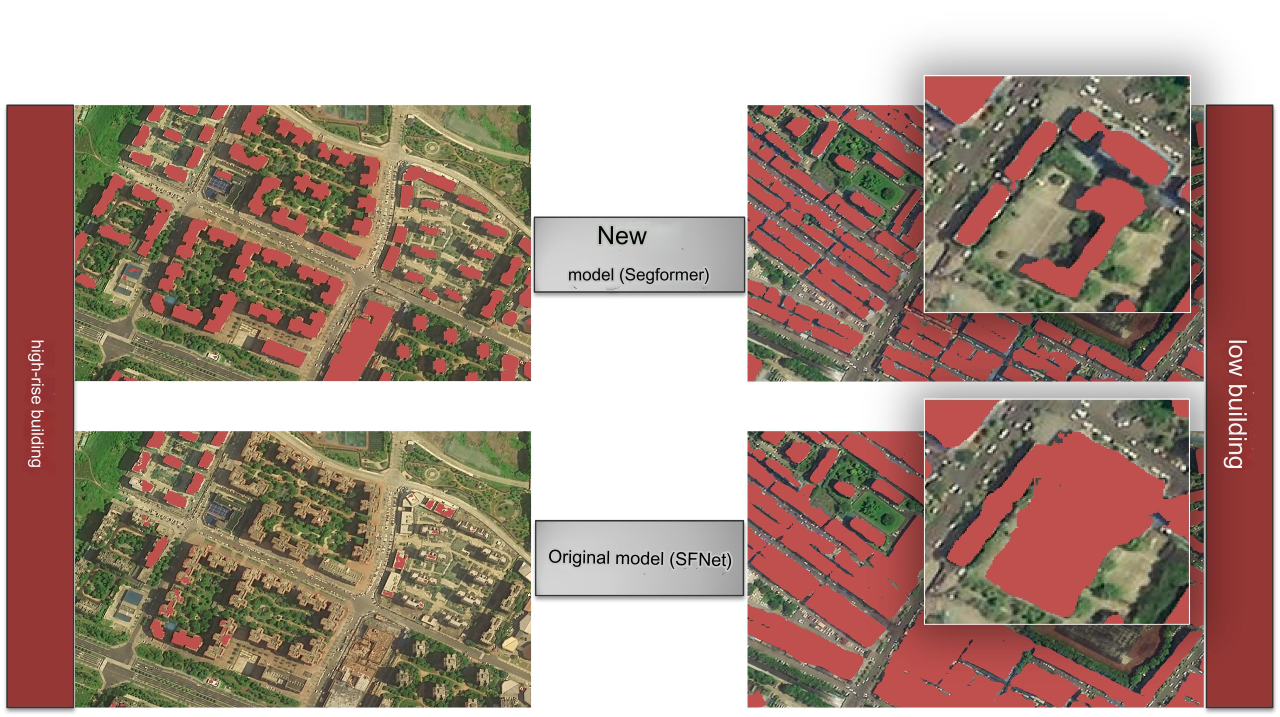

Among them, the urban building extraction model and the urban water body extraction model have been optimized in both model structure and training data relative to the previous V1 version. For example, the urban building extraction model uses the SegFormer model newly added in the 2023 version. SegFormer applies Transformers to semantic segmentation and balances accuracy, efficiency, and robustness; it is a simple and efficient yet powerful segmentation framework. Compared with the V1 version, which was trained with the SFNet model, the extraction results are greatly improved.

▲Comparison between urban building extraction model V1 and V2

SAM large model

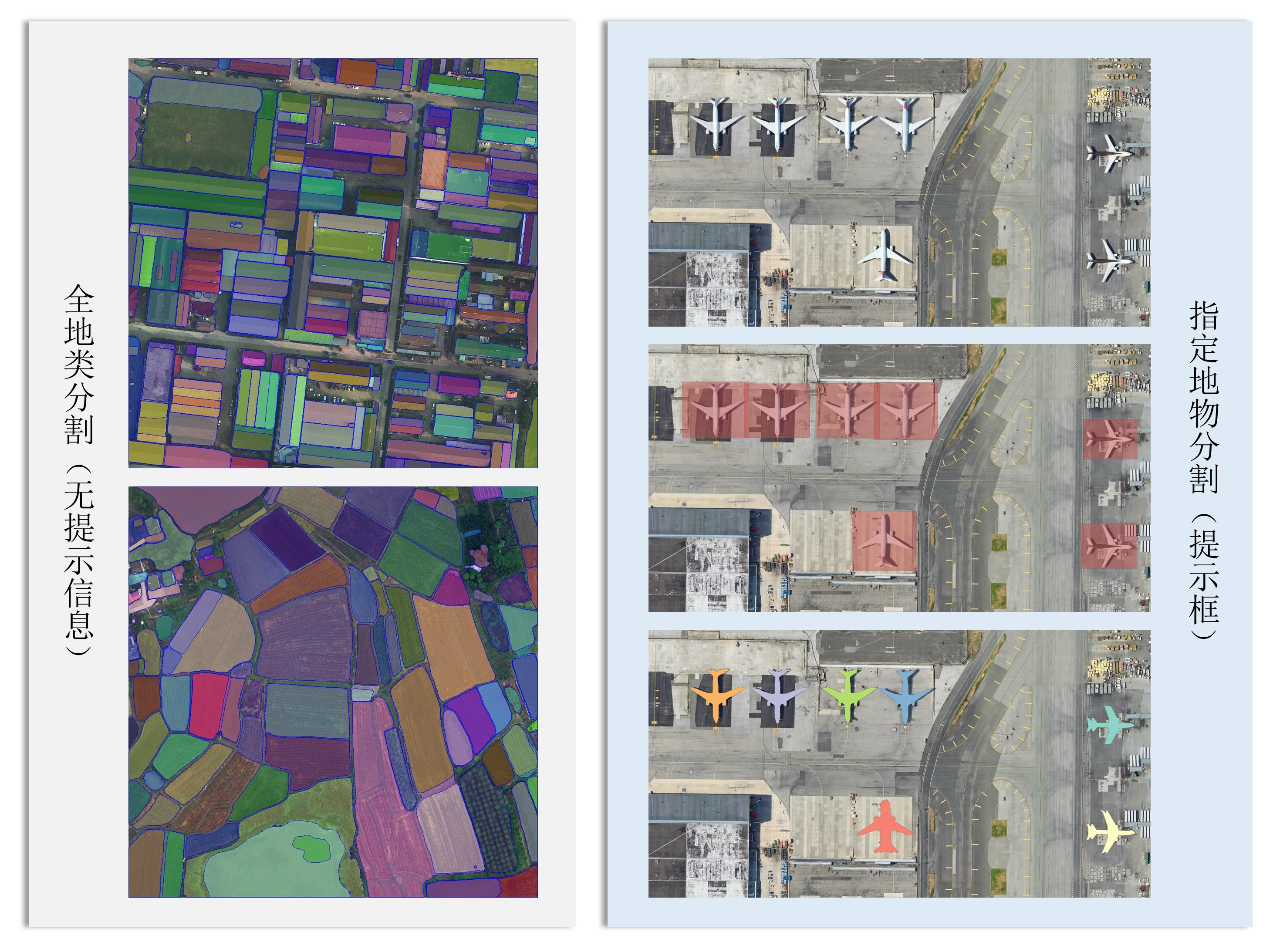

In April 2023, Meta released the Segment Anything Model (SAM), a large computer vision model. The model was trained on over 1 billion masks and can segment objects in an image with a single click, which provides a foundation for intelligent interpretation of remote sensing images.

SAM can be used without additional training and generalizes well. However, when it segments everything in an image, the resulting masks carry no semantic information. In addition, its training data is not a remote sensing data set, so without prompts and interaction it adapts poorly to the multiple scales found in remote sensing images. SuperMap therefore adopts a target detection + SAM segmentation solution: a target detection algorithm such as Cascade R-CNN provides bounding-box prompts, and SAM then generates an instance segmentation mask for each detected target.

▲SAM segmentation solution: segmentation of all ground objects across the whole area (left) and segmentation of specified ground objects (right)
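
The detection + SAM flow described above can be outlined in a few lines. This is a minimal sketch, not SuperMap's implementation: `detect_then_segment`, `toy_detector`, and `toy_box_segmenter` are hypothetical names, and the two toy functions only stand in for a real detector (such as Cascade R-CNN) and for SAM's box-prompted segmentation so that the example runs without model weights.

```python
from typing import Callable, Dict, List, Tuple

Image = List[List[int]]            # toy single-band image, row-major
Box = Tuple[int, int, int, int]    # (x_min, y_min, x_max, y_max), max exclusive
Mask = List[List[bool]]


def detect_then_segment(
    image: Image,
    detector: Callable[[Image], List[Tuple[str, Box]]],
    segmenter: Callable[[Image, Box], Mask],
) -> List[Dict[str, object]]:
    """Run the detector, then prompt the segmenter once per detected box."""
    instances = []
    for label, box in detector(image):
        instances.append(
            {"label": label, "box": box, "mask": segmenter(image, box)}
        )
    return instances


def toy_detector(image: Image) -> List[Tuple[str, Box]]:
    """Hypothetical stand-in for a detector: one box around all non-zero pixels."""
    rows = [r for r, row in enumerate(image) if any(row)]
    cols = [c for c in range(len(image[0])) if any(row[c] for row in image)]
    if not rows:
        return []
    return [("building", (min(cols), min(rows), max(cols) + 1, max(rows) + 1))]


def toy_box_segmenter(image: Image, box: Box) -> Mask:
    """Hypothetical stand-in for box-prompted SAM: foreground pixels inside the box."""
    x0, y0, x1, y1 = box
    return [
        [x0 <= c < x1 and y0 <= r < y1 and value > 0 for c, value in enumerate(row)]
        for r, row in enumerate(image)
    ]


image = [
    [0, 0, 0, 0],
    [0, 9, 9, 0],
    [0, 9, 0, 0],
    [0, 0, 0, 0],
]
instances = detect_then_segment(image, toy_detector, toy_box_segmenter)
```

The detector contributes the class label that SAM alone does not provide, and the box prompt restricts the segmenter to one target at a time, so each result carries both a label and a mask.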

How to use the pre-trained models?

Ready to use - no training required, saving you effort

If a pre-trained model suits the application scenario, it can be applied directly to the image to be interpreted, which removes the complex work of preparing and producing training data and training a model. You only need to open the corresponding model inference tool and supply the image and the pre-trained model; the tool then produces the interpretation result. Model inference is available in the SuperMap desktop product (SuperMap iDesktopX), the component product (SuperMap iObjects Python), and the server product (SuperMap iServer). It accepts several input forms, including single image files, image folders, mosaic datasets, and image services, and it supports inference over a specified range.

▲"Ready-to-use" pre-trained model

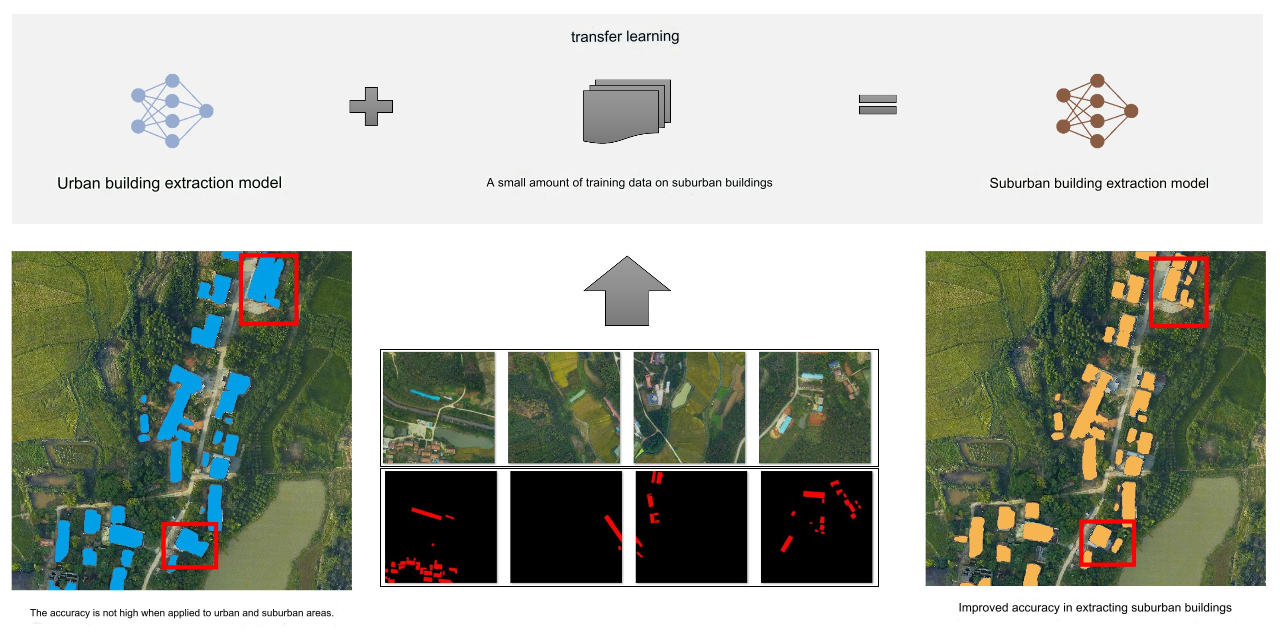
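
The article does not describe how inference over a specified range is implemented internally. As an illustration only, the sketch below assumes a common tile-based approach: the specified range is given as a pixel extent and is split into overlapping windows that a model could process one at a time. The function name `tile_windows` and the tile and overlap sizes are hypothetical, not SuperMap parameters.

```python
from typing import List, Tuple

Window = Tuple[int, int, int, int]  # (x_min, y_min, x_max, y_max), max exclusive


def tile_windows(region: Window, tile: int = 512, overlap: int = 64) -> List[Window]:
    """Split a pixel region into overlapping windows no larger than tile x tile."""
    if not 0 <= overlap < tile:
        raise ValueError("overlap must satisfy 0 <= overlap < tile")
    rx0, ry0, rx1, ry1 = region
    if rx1 <= rx0 or ry1 <= ry0:
        return []  # empty region, nothing to infer

    stride = tile - overlap  # distance between the starts of adjacent windows
    windows = []
    y = ry0
    while True:
        y1 = min(y + tile, ry1)  # clip the last row of windows to the region
        x = rx0
        while True:
            x1 = min(x + tile, rx1)  # clip the last column to the region
            windows.append((x, y, x1, y1))
            if x1 >= rx1:
                break
            x += stride
        if y1 >= ry1:
            break
        y += stride
    return windows


# Hypothetical range of interest inside a larger image, in pixel coordinates.
windows = tile_windows((100, 200, 1100, 700))
```

Overlapping neighbouring windows is a common way to reduce seams at tile borders when the per-tile results are merged; whether SuperMap's tools do this is an assumption here.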

Transfer learning - improving the generalization of pre-trained models

The pre-trained models focus on ground objects that are common in remote sensing image interpretation, but practical applications often have more specific needs. For example, the current urban building extraction model performs well on buildings with varied shapes and distributions against an urban background, yet its accuracy on clusters of small buildings in suburban areas still has room for improvement. Transfer learning can be used to meet requirements of this kind.

Transfer learning is a machine learning approach that applies knowledge gained from solving one problem to another, similar problem. In the example above, a small amount of suburban building training data can be used to fine-tune the parameters of the urban building extraction model, producing a new suburban building extraction model. Compared with training a suburban building extraction model from scratch, transfer learning requires less training data, so a new model that meets the application's needs can be obtained quickly.

▲Transfer learning process
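
The fine-tuning idea can be shown with a deliberately tiny example. This is a sketch under stated assumptions, not SuperMap's training workflow: a two-parameter linear model stands in for the segmentation network, `fine_tune` is a hypothetical helper, and the "pre-trained" weights and the four target samples are made-up numbers. The weight `w` is kept frozen and only the bias `b` is adjusted on the new data.

```python
from typing import Dict, Iterable, List, Tuple

Sample = Tuple[float, float]  # (input x, expected output y)


def predict(params: Dict[str, float], x: float) -> float:
    return params["w"] * x + params["b"]


def mse(params: Dict[str, float], data: List[Sample]) -> float:
    return sum((predict(params, x) - y) ** 2 for x, y in data) / len(data)


def fine_tune(
    pretrained: Dict[str, float],
    data: List[Sample],
    trainable: Iterable[str] = ("w", "b"),
    lr: float = 0.05,
    epochs: int = 200,
) -> Dict[str, float]:
    """Gradient descent on MSE, starting from pre-trained parameters.

    Parameters not listed in `trainable` stay frozen at their pre-trained values.
    """
    params = dict(pretrained)  # copy so the pre-trained model is left untouched
    trainable = set(trainable)
    n = len(data)
    for _ in range(epochs):
        # Compute both gradients before updating any parameter.
        grad_w = sum(2 * (predict(params, x) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(params, x) - y) for x, y in data) / n
        if "w" in trainable:
            params["w"] -= lr * grad_w
        if "b" in trainable:
            params["b"] -= lr * grad_b
    return params


# Made-up "pre-trained" parameters, assumed to come from a source task y = 2x.
pretrained = {"w": 2.0, "b": 0.0}
# A small target data set from a related task, y = 2x + 1.
target_samples = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

fine_tuned = fine_tune(pretrained, target_samples, trainable=("b",))
```

Because the frozen weight already fits the target task, four samples are enough to correct the remaining offset; in a real network the analogous choice is which layers to freeze and which to update.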

Deep learning has greatly improved the efficiency of remote sensing image interpretation, but the data, time, and computing costs of training deep learning models cannot be ignored in practical applications. To reduce these costs for users, SuperMap provides pre-trained models for intelligent interpretation of remote sensing images. Beyond the six commonly used models introduced in this article, SuperMap will continue to provide pre-trained models for different tasks and different ground objects, and will upgrade and optimize the existing models, to help more users complete remote sensing image interpretation tasks efficiently.